Image, Video, and LIDAR Labeling

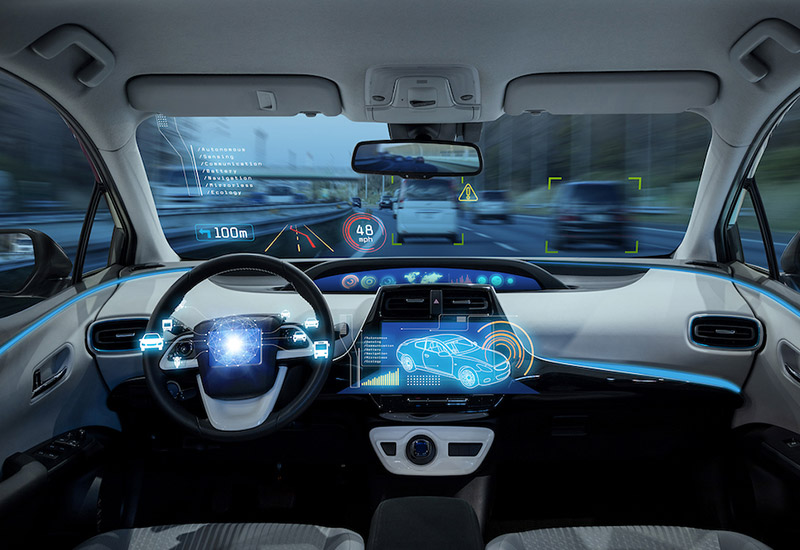

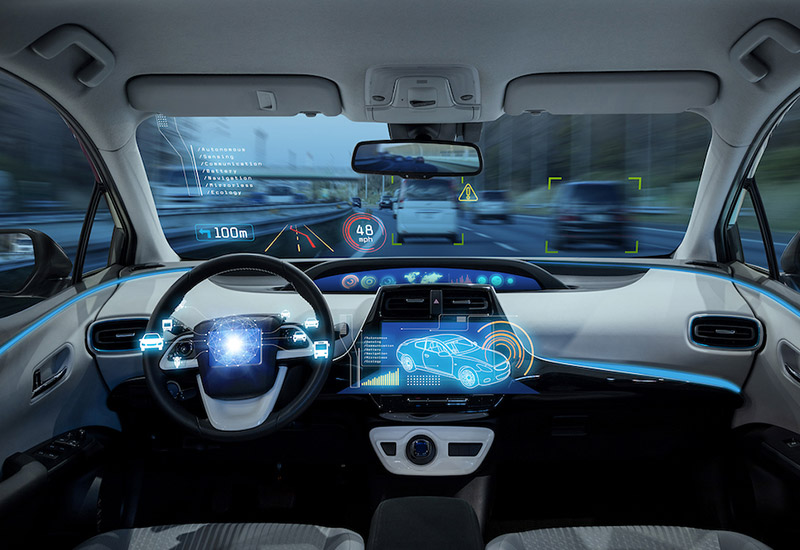

Most automotive companies are embedding features that let their vehicles sense the environment and move safely with little or no driver input. To this end, the embedded intelligent component must capture images in real time, process the captured images and video to assess the current situation, decide how to respond, and execute the corresponding actions.
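
The "assess, then decide" step can be illustrated with a minimal sketch. It assumes that perception has already produced labeled detections (the kind of output that labeled image, video, and LIDAR data is used to train). The `Detection` type, the label names, and the 15-meter threshold are all hypothetical and chosen only for illustration; this is not a real driving policy.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str         # object class, e.g. "pedestrian" (hypothetical label set)
    distance_m: float  # estimated distance from the vehicle, in meters


# Hypothetical set of classes treated as hazards in this toy example.
HAZARD_LABELS = {"pedestrian", "vehicle", "cyclist"}


def decide(detections: list[Detection], brake_distance_m: float = 15.0) -> str:
    """Pick an action from the current detections (toy rule for illustration)."""
    hazards = [d for d in detections if d.label in HAZARD_LABELS]
    if any(d.distance_m < brake_distance_m for d in hazards):
        return "brake"      # a hazard is closer than the threshold
    if hazards:
        return "slow_down"  # hazards are present but still far away
    return "continue"       # nothing relevant detected
```

For example, `decide([Detection("pedestrian", 8.0)])` returns `"brake"`, while a list containing only a distant vehicle returns `"slow_down"`. The quality of such decisions depends directly on how accurately the detections were labeled in the first place.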

Localization of Virtual Assistants and Infotainment Systems

Despite the popular belief that English is the world’s most-spoken language by number of native speakers, that is not the case. Mandarin ranks first and Spanish second, followed by English, Hindi, Arabic, Portuguese, Bengali, and Russian. This is why voice interaction systems in cars should be available in many languages, not only in English. Being able to speak in their native language improves the user’s experience and benefits safety, because drivers do not have to spend attention translating their intent into another language. However, localizing these systems into different languages is not an easy job: it requires adapting natural language understanding (NLU) and natural language generation (NLG), as well as the other components of the virtual assistant.

Text-to-Speech Tuning

Speech synthesis technology has become a must in the automotive industry because it improves the driver’s experience and increases safety. Drivers can now have notifications read aloud, receive directions, or interact with the vehicle’s features while keeping their eyes on the road. To make this interaction as natural as possible, the speech synthesizer must be carefully tuned.

Speech-to-Text Transcription

Speech recognition is already seen as a commodity feature inside vehicles. Commands for the infotainment system, navigation, and audio devices can be issued entirely by voice. In the automotive sector, the biggest challenge to making these systems work properly is the specific acoustic environment found in cars.

Development and Optimization of Natural Language Understanding

NLU supports human-computer interaction (HCI) by making it possible for computers to infer what you actually mean when you speak a command, rather than only capturing the words you say. This allows a more natural conversation because you aren’t limited to a single way of phrasing a specific command. You simply speak the command in everyday language and the virtual assistant should understand it. Identifying the most common ways users ask for services in your line of business also helps define your voice search optimization strategy.
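
The idea that several phrasings map to one command can be sketched as follows. This is a deliberately simple pattern-matching example, not how a production NLU system works (those typically rely on trained statistical models). The intent names and trigger phrases are hypothetical and invented for illustration.

```python
import re

# Hypothetical intents and trigger patterns, invented for this example.
INTENT_PATTERNS = {
    "navigate": [r"\b(take me|navigate|drive|directions?)\b.*\bto\b"],
    "play_music": [r"\b(play|put on|listen to)\b"],
    "set_temperature": [r"\b(too (cold|hot)|warmer|cooler|temperature)\b"],
}


def detect_intent(utterance: str) -> str:
    """Return the first intent whose pattern matches, or "unknown"."""
    text = utterance.lower()
    for intent, patterns in INTENT_PATTERNS.items():
        if any(re.search(pattern, text) for pattern in patterns):
            return intent
    return "unknown"
```

With these patterns, "Take me to the nearest gas station", "Navigate to Main Street", and "I need directions to the airport" all resolve to the same `navigate` intent, which is the behavior the paragraph above describes. Collecting the phrasings real users actually produce is what makes such a mapping, or the training data for a learned model, useful.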

Transcreation of System Responses

In-car voice assistants simulate human interaction, and making that convincing requires building a unique persona. Tone, cultural references, politeness, and similar elements must be handled carefully when adapting to other languages in order to avoid misunderstandings or uncomfortable reactions. The process of adapting both the responses and their cultural content is called “transcreation,” and a well-localized voice assistant takes these elements into account.

In-Lab or In-Vehicle Testing

Automotive original equipment manufacturers (OEMs) and suppliers are always challenged to provide in-vehicle features that excite their customers. Testing these dynamic systems can be a difficult task, but it is a crucial step and can determine whether your product release is a success or a total failure.

User Studies

When in-lab and functional testing is over, it’s time to evaluate the user experience with real users in real environments, using a large, diverse, and targeted group of testers. What do drivers and passengers say about their experience using your new version or product while driving at different speeds or while stationary, and with the windows closed or open?
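
The conditions mentioned above (speed and window state) can be combined into a simple test matrix so that every combination is covered. The sketch below assumes three example speeds, with 0 standing for a stationary vehicle; the specific values and field names are hypothetical.

```python
from itertools import product

# Hypothetical condition values; 0 km/h represents a stationary vehicle.
speeds_kmh = [0, 50, 100]
window_states = ["closed", "open"]

# Every combination of speed and window state becomes one test condition.
test_conditions = [
    {"speed_kmh": speed, "windows": windows}
    for speed, windows in product(speeds_kmh, window_states)
]

for condition in test_conditions:
    print(condition)
```

Each tester session can then be assigned one or more of these conditions, which makes it easier to see afterwards which combinations received feedback and which did not.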